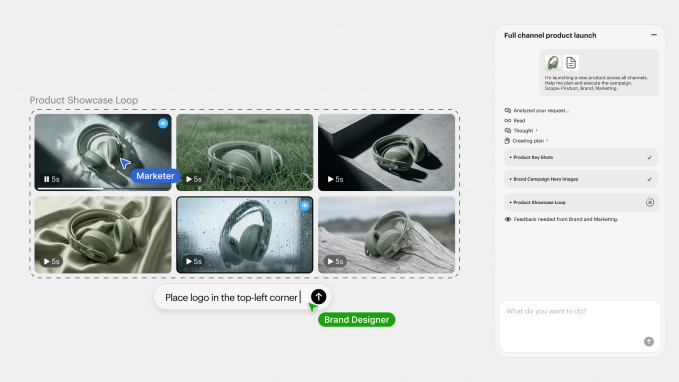

AI video production startup Luma on Thursday launched Luma Agents, designed to handle end-to-end creative work across text, images, video and audio. Luma Agents is powered by the startup's Unified Intelligence model family, whose architecture is trained as a single multimodal reasoning system.

Luma Agents is being introduced as a new way of doing work for ad agencies, marketing teams, design studios, and enterprises. Luma says its agents are able to plan and create text, images, video and audio while coordinating with other AI models, including Luma’s Ray 3.14, Google’s Veo 3, Nano Banana Pro, ByteDance’s Seedream and ElevenLabs’ voice models.

Luma Agents is built on the startup's Uni-1 model, the first in the Unified Intelligence family of AI models. According to Amit Jain, CEO and co-founder of Luma, it has been trained on audio, video, images, language and spatial reasoning.

Jain told TechCrunch that the Uni-1 model can “think in language, visualize and display in pixels or images… We call it ‘pixel intelligence.’” He added that other output capabilities such as audio and video will come in later model versions.

“Our customers are not buying the tool, they are reshaping the way business is done,” Jain said.

Luma has already begun rolling out its new agent platform with existing clients, including global advertising agencies Publicis Groupe and Serviceplan, as well as companies such as Adidas, Mazda and Saudi AI company Humain.

Jain said Luma Agents are game-changers because they can maintain continuous context across assets, collaborators and creative iterations. They can also evaluate their own outputs and refine them through repeated self-critique, he added.


This kind of ability to verify your work is what made programming agents so useful, Jain said. “You need that ability to evaluate your work, fix it, and go through that loop until the solution is good and accurate.”

Jain said the current workflow for using AI tools in creative environments doesn't deliver the kind of speedup that people in the creative industry expect from AI. Instead, it's more like: "Here are 100 models. Learn how to prompt them," he said.

“With unified intelligence, because these models understand as well as produce, we are able to build a system capable of doing this kind of end-to-end work,” Jain said.

Take, for example, a human architect drawing a building. When they draw lines, they create an internal mental representation of structure, light, spatial dynamics, and lived experience. Jain says this is the same principle on which unified intelligence was built.

Jain said the system can significantly speed up creative workflows. In a demo, he showed how a 200-word brief and an image of a product (a lipstick) prompted the system to generate a range of ideas for locations, models and color schemes for an ad campaign.

What makes Luma Agents different, he said, is that users don't need to prompt back and forth for every iteration of an image or idea. Instead, the system generates large sets of variations and lets users steer the direction as the conversation unfolds.

In another example, Jain said Luma Agents turned a $15 million, year-long brand campaign into multiple localized ads for different countries in 40 hours for less than $20,000, passing the brand's internal quality control and accuracy checks.

While Luma Agents is now publicly available via an application programming interface (API), Jain said the startup plans to roll out access gradually to keep the service reliable and avoid disrupting users' workflows.